Abstract

Recent interactive video world models generate scene evolution conditioned on user instructions. Although they achieve impressive results, two key limitations remain. First, they exhibit motion drift in complex environments with multiple interacting subjects, where dynamic subjects fail to follow realistic motion patterns as the scene evolves. Second, they suffer from error accumulation in long-horizon interactions, where autoregressive generation gradually drifts away from earlier scene states and introduces structural and semantic inconsistencies. In this paper, we propose MagicWorld, an interactive video world model built upon an autoregressive framework. To address motion drift, we incorporate a flow-guided motion preservation constraint that mitigates motion degradation in dynamic subjects, encouraging realistic motion patterns and stable interactions during scene evolution. To mitigate error accumulation in long-horizon interactions, we design two complementary strategies: history cache retrieval and enhanced interactive training. The former reinforces historical scene states by retrieving past generations during interaction. The latter adopts multi-shot aggregated distillation with dual-reward weighting for interactive training, which improves long-term stability and reduces error accumulation. In addition, we construct RealWM120K, a real-world dataset of diverse city-walk videos with multimodal annotations that supports dynamic perception and long-horizon world modeling. Experimental results demonstrate that MagicWorld improves motion realism and alleviates error accumulation during long-horizon interactions.

Method

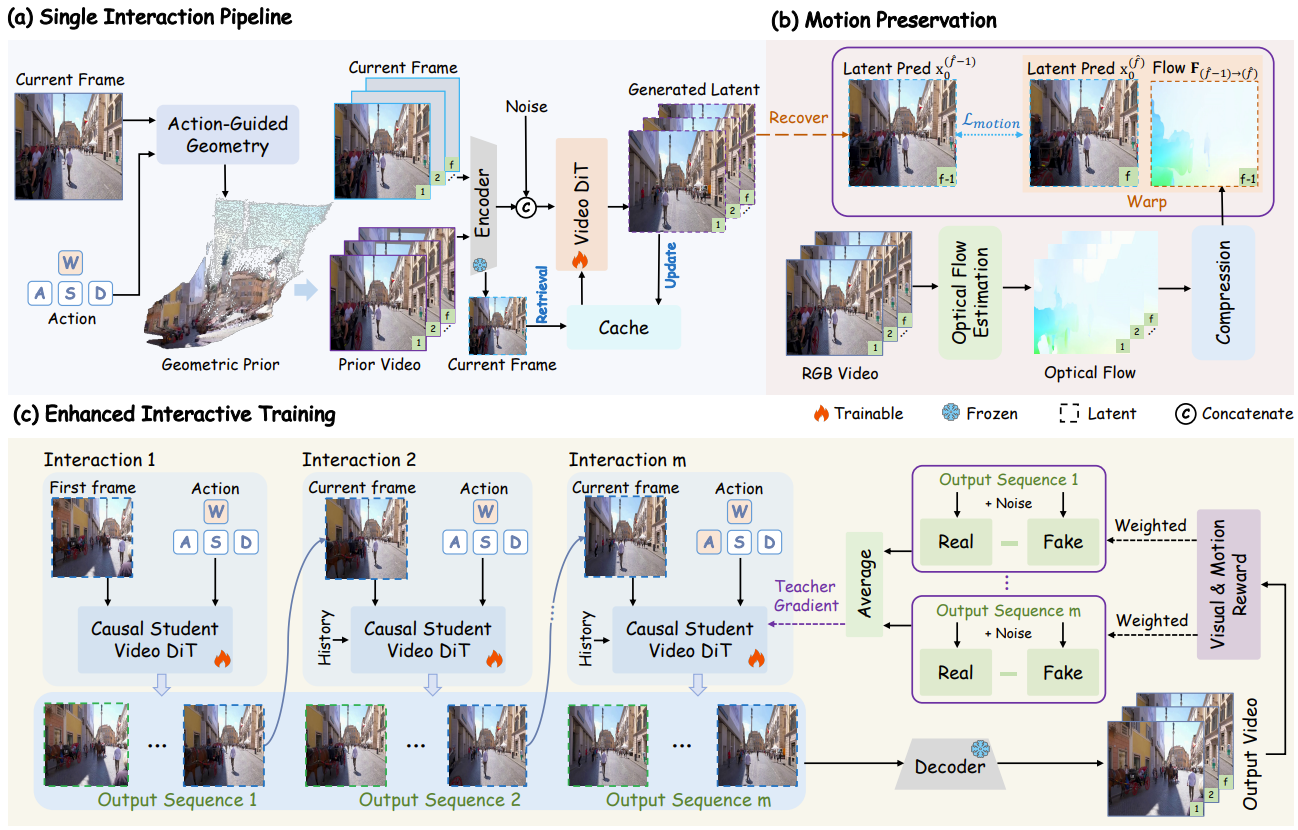

Overview of MagicWorld. (a) Single interaction pipeline with history cache retrieval. (b) Flow-guided motion preservation enforces temporal coherence in dynamic regions. (c) Enhanced interactive training with multi-shot aggregated reward distribution matching distillation, jointly optimizing multi-step rollouts with visual and motion rewards to improve motion realism and mitigate error accumulation.
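The overview names three mechanisms without giving their formulas. The block below is a minimal, hypothetical sketch of one plausible form for each, written in pure Python on toy inputs so it runs without dependencies. It is not MagicWorld's implementation. All function names are illustrative assumptions, as are the following choices: (a) history cache retrieval as cosine-similarity top-k over cached latent vectors; (b) the flow-guided motion preservation term as a masked L1 error between frame t and frame t+1 warped back along optical flow with nearest-neighbour sampling; and (c) dual-reward weighting as a softmax over a convex mix (weight `lam`) of visual and motion rewards, used to aggregate per-shot distillation losses.

```python
import math
from typing import List, Tuple

# Hypothetical toy representations (assumptions, not MagicWorld's data types).
Frame = List[List[float]]                # H x W grayscale frame
Flow = List[List[Tuple[float, float]]]   # per-pixel (dx, dy) from frame t to frame t+1


def retrieve_history(query: List[float], cache: List[List[float]], k: int = 2) -> List[int]:
    """(a) Assumed retrieval: indices of the k cached latents most cosine-similar to the query."""
    def cos(a: List[float], b: List[float]) -> float:
        num = sum(x * y for x, y in zip(a, b))
        den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return num / den if den else 0.0
    ranked = sorted(range(len(cache)), key=lambda i: cos(query, cache[i]), reverse=True)
    return ranked[:k]


def warp_backward(next_frame: Frame, flow: Flow) -> Frame:
    """Predict frame t by sampling frame t+1 at p + flow(p) (nearest neighbour, border-clamped)."""
    h, w = len(next_frame), len(next_frame[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx, dy = flow[y][x]
            sx = min(max(int(round(x + dx)), 0), w - 1)
            sy = min(max(int(round(y + dy)), 0), h - 1)
            out[y][x] = next_frame[sy][sx]
    return out


def flow_motion_loss(frame: Frame, next_frame: Frame, flow: Flow, mask: List[List[int]]) -> float:
    """(b) Assumed constraint: mean |frame - warped(next_frame)| over dynamic pixels (mask == 1)."""
    warped = warp_backward(next_frame, flow)
    total, count = 0.0, 0
    for y, row in enumerate(mask):
        for x, m in enumerate(row):
            if m:
                total += abs(frame[y][x] - warped[y][x])
                count += 1
    return total / count if count else 0.0


def dual_reward_weights(visual: List[float], motion: List[float],
                        lam: float = 0.5, temp: float = 1.0) -> List[float]:
    """(c) Assumed weighting: softmax over shots of (1 - lam) * visual + lam * motion."""
    scores = [((1 - lam) * v + lam * m) / temp for v, m in zip(visual, motion)]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def aggregated_loss(shot_losses: List[float], weights: List[float]) -> float:
    """(c) Reward-weighted sum of per-shot distillation losses across a multi-shot rollout."""
    return sum(w * l for w, l in zip(weights, shot_losses))


if __name__ == "__main__":
    # A bright pixel at (row 1, col 1) moves one column to the right between frames.
    f0 = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
    f1 = [[0.0, 0.0, 0.0], [0.0, 0.0, 1.0], [0.0, 0.0, 0.0]]
    zero = [[(0.0, 0.0)] * 3 for _ in range(3)]
    moving = [row[:] for row in zero]
    moving[1][1] = (1.0, 0.0)
    mask = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
    print(flow_motion_loss(f0, f1, moving, mask))  # correct flow: no penalty
    print(flow_motion_loss(f0, f1, zero, mask))    # ignoring motion: penalized
```

In this toy example, a flow field that tracks the moving pixel yields zero loss, while a static (zero) flow is penalized. That is the intuition behind restricting the constraint to dynamic regions. Subtracting the maximum score before exponentiating keeps the softmax stable when rewards are large.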